- What Is AI Security?

- AI’s Role in Modern Cybersecurity

- How Does AI Security Work?

- What to Look for in AI-Based Security Tools

- AI Security Risks

- Why Is the Security of AI Important?

- Benefits of AI Security

- Top AI Security Challenges

- How Can You Implement AI Security in Your Business?

- AI Security Best Practices

Artificial Intelligence (AI) is not just a future idea; it’s changing industries now. It drives innovation and is part of our digital lives. AI enhances customer experiences using smart chatbots. It also strengthens critical infrastructure through predictive analytics. This brings new efficiency and capability. However, rapid integration also creates new vulnerabilities and threats. This leads to a vital field: AI security.

What Is AI Security?

AI security protects AI systems, data, and models from misuse and attacks. It also uses AI to boost cybersecurity. In short, it’s about keeping your AI systems secure, trustworthy, and strong. This way, they won’t be the next target for a cyberattack.

AI security sits at the intersection of cybersecurity, data science, and governance. It secures the AI lifecycle. This includes data collection, training, deployment, and daily use. Applications like chatbots, fraud detectors, and automation agents benefit from this approach.

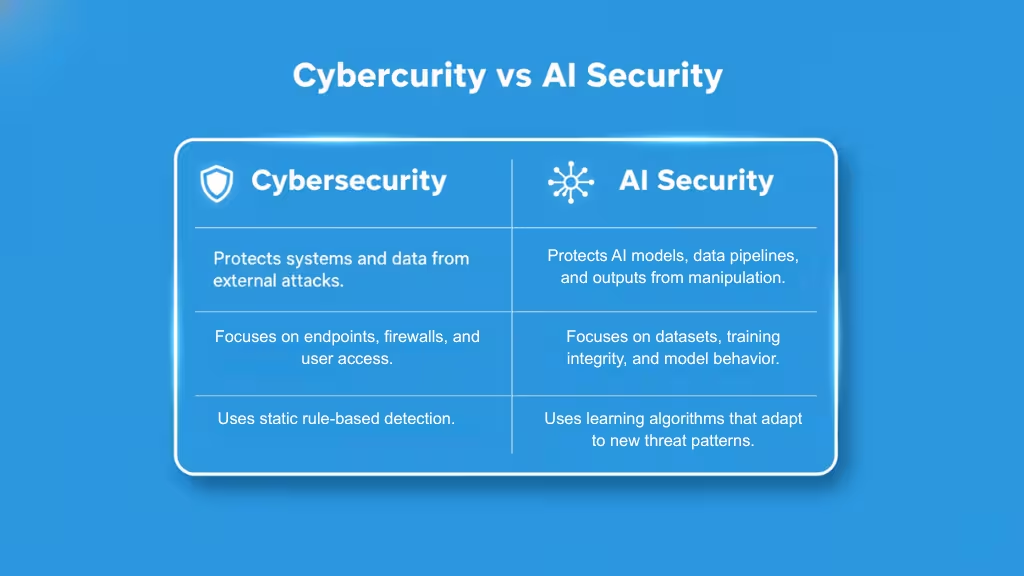

AI Security vs Cybersecurity

Traditional cybersecurity protects networks, endpoints, and cloud systems from attacks. In contrast, AI security safeguards the intelligence layer. This includes the models and data that drive automation and decision-making.

In other words, cybersecurity keeps your systems safe; AI security keeps your AI honest.

AI’s Role in Modern Cybersecurity

Artificial Intelligence has changed how organizations find, study, and tackle cyber threats.

AI models now handle what used to take overworked analysts hours. They can detect patterns, link data, and trigger automated defenses instantly.

But this evolution is not just about speed it’s about strategy. AI allows security teams to move from reactive defense to predictive resilience.

The Rise of SIEM and EDR

To understand AI’s modern role, it helps to start with the tools that prepared the ground for Security Information and Event Management (SIEM) and Endpoint Detection and Response (EDR) systems.

- SIEM systems collect and correlate log data from across your environment’s servers, endpoints, firewalls, and cloud apps, giving visibility into suspicious activities.

- EDR tools track behaviors on individual devices to detect and isolate threats like malware or ransomware in real time.

These systems were powerful, but data overload became their Achilles’ heel. Enterprises generate terabytes of telemetry every day, and human analysts can’t keep up with the volume, velocity, and variety of threats.

That’s where AI-driven analytics changed the game. Machine learning models began to detect anomalies, score risks, and surface the most relevant alerts, transforming static monitoring into intelligent defense.

From Automation to Intelligence

Traditional security automation was rule-based (“if X, then Y”). AI evolved this into context-aware automation: algorithms that learn from patterns, adapt to new attack vectors, and predict risks before they occur.

Example:

A conventional SIEM might flag multiple failed login attempts. An AI-powered system, however, can:

- Detect that the login attempts are from an unusual geography,

- Recognize that the user’s access pattern doesn’t match historical behavior, and

- Automatically trigger identity verification or temporary account suspension all in seconds.

This isn’t just automation; it’s machine intelligence applied to cybersecurity.

AI in Action

Today’s leading cybersecurity ecosystems (like Palo Alto Cortex XDR, IBM QRadar, and Vectra AI) rely on AI in three critical ways:

- Threat Detection:

AI models analyze millions of events to identify patterns humans might miss such as lateral movement, credential misuse, or insider threats. - Anomaly Scoring:

Machine learning assigns contextual risk scores to behaviors and entities, allowing teams to prioritize incidents that truly matter. - Incident Response:

When paired with automation, AI enables systems to quarantine compromised assets, block malicious IPs, or trigger multi-factor authentication without human delay. - Augmented Decision-Making:

Generative AI and LLMs are now entering the SOC (Security Operations Center). The AI acts as a security copilot, accelerating investigation and reducing fatigue.

The Business Impact

For modern enterprises, AI in cybersecurity delivers measurable gains:

- Faster detection and response times (IBM reports up to 70% reduction in MTTR).

- Higher analyst productivity automating repetitive triage and reporting.

- Reduced breach risk and downtime, protecting both brand and customer trust.

The Hidden Risk

However, the same AI that strengthens defenses also empowers attackers. Malicious actors are already using generative models to craft phishing campaigns, automate malware generation, and exploit vulnerabilities faster than ever before.

That’s why AI security must evolve in parallel to protect not just against human attackers, but against AI-powered adversaries.

How Does AI Security Work?

AI security isn’t a single tool it’s a framework of continuous monitoring, protection, and adaptation. Just as AI learns from data, AI security systems learn from threats.

Modern organizations deploy a mix of machine learning, automation, and governance controls to secure every stage of the AI lifecycle from data ingestion to model deployment. The goal: detect risks early, respond automatically, and ensure that every decision your AI makes is trustworthy and verifiable.

1. Threat Detection and Anomaly Scoring

At the heart of AI security lies threat detection the ability to spot unusual patterns in massive streams of data.

AI-driven security platforms analyze billions of events, logins, transactions, API calls, and model queries to identify behavioral anomalies that signal a potential attack.

Unlike rule-based systems that rely on static signatures, AI models use unsupervised and semi-supervised learning to discover hidden threats that traditional tools can’t see.

For example:

- Detecting data drift that hints at poisoning or manipulation.

- Spotting model extraction attempts through unusually similar query patterns.

- Flagging prompt injections by scanning for hidden instructions in user or web inputs.

2. Automation and Response

Detection is only half the battle. The next step is autonomous response where AI acts on insights without waiting for human intervention.

Using reinforcement learning and event correlation, modern AI security platforms can:

- Isolate compromised endpoints, containers, or virtual machines.

- Revoke credentials or trigger multi-factor authentication when suspicious behavior is detected.

- Quarantine corrupted datasets or block malicious API calls automatically.

This automation drastically reduces Mean Time to Response (MTTR) from hours to seconds.

3. Protecting AI Pipelines

Securing AI models requires a unique focus on the AI development pipeline the sequence of steps that prepare, train, and deploy AI systems.

Without oversight, these pipelines are vulnerable to data poisoning, model theft, and prompt injection often before the model even reaches production.

Key protection layers include:

- Data Validation & Provenance – Verifying dataset sources, applying checksums, and tracking lineage to ensure data integrity.

- Model Hardening – Implementing watermarking, differential privacy, or adversarial training to defend against model manipulation.

- Access Control & Encryption – Limiting who can retrain or export models; encrypting weights and embeddings.

- Pipeline Monitoring – Watching for unauthorized changes in training jobs, container registries, or dependency packages.

4. Regular Audits and Updates

AI systems, like humans, drift over time. Data evolves, behavior changes, and models degrade a risk called model drift or concept drift.

That’s why AI security depends on continuous audits and lifecycle management, including:

- Regular model evaluations for bias, accuracy, and integrity.

- Periodic red-teaming to simulate adversarial attacks.

- Patch management for dependencies and frameworks.

- Governance reporting to prove compliance with AI security and privacy regulations (e.g., NIST AI RMF, ISO 42001).

Audits ensure that what your model should do and what it actually does remain aligned. When AI makes decisions at scale, trust must be measurable, not assumed.

What to Look for in AI-Based Security Tools

As AI adoption accelerates, the cybersecurity market is exploding with “AI-powered” tools. But not all AI security solutions are created equal. Some merely add a layer of machine learning on top of old systems, others are built from the ground up to protect AI models, pipelines, and data at enterprise scale.

Choosing the right platform means knowing what truly matters.

1. Contextual Risk Correlation

AI security tools must do more than detect isolated anomalies they must understand the context behind every event.

For instance:

- Is a flagged login attempt part of a larger credential stuffing campaign?

- Is a strange API call an indicator of an ongoing data exfiltration attempt?

Context-aware tools use graph-based machine learning and attack path modeling to connect dots across users, assets, and networks. This turns millions of raw alerts into a few actionable insights critical for efficiency and accuracy.

2. Automated Attack Path Detection

Modern attackers don’t just exploit one vulnerability they chain multiple weaknesses to reach critical assets.

That’s why top-tier AI security systems should include automated attack path analysis:

- Mapping lateral movement across your hybrid environment.

- Simulating “what-if” breach scenarios in real time.

- Highlighting high-impact vulnerabilities that lead directly to your AI systems or sensitive data.

This is where AI-driven simulation meets risk intelligence, helping your security team predict and block attack routes before they’re exploited.

3. Continuous AI Model Monitoring

AI systems don’t stop learning once they’re deployed and neither do attackers. That’s why continuous monitoring is critical to detect:

- Model drift: when predictions deviate from baseline accuracy.

- Data drift: when new data behaves differently from training data.

- Behavioral anomalies: such as unexpected prompt responses, hallucinations, or data leakage.

Advanced tools provide model observability dashboards, similar to DevOps monitoring, but focused on trust, fairness, and security metrics. They also log every inference and user prompt, enabling audit trails for compliance and investigation.

4. LLM and AI Model Discovery

“Shadow AI” unauthorized or unmonitored AI use inside organizations has become one of the biggest emerging risks.

Employees integrate LLMs or third-party AI APIs into workflows without oversight, exposing sensitive data in the process.

AI security tools should automatically discover and inventory all AI assets in use:

- Internal models

- Third-party APIs

- Embedding databases

- Prompt logs and vector stores

This provides visibility, ensuring compliance teams know where AI lives, what it accesses, and how it’s being used.

5. Risk-Based Prioritization

No organization can fix every vulnerability at once. The right AI security solution helps you prioritize by impact, combining technical risk (vulnerability severity) with business risk (asset criticality).

Tools that provide risk-based scoring allow leaders to make data-driven decisions about:

- Which vulnerabilities to patch first.

- Which model behaviors to retrain.

- Which alerts should be escalated immediately?

Risk prioritization transforms AI security from reactive firefighting to strategic resource allocation.

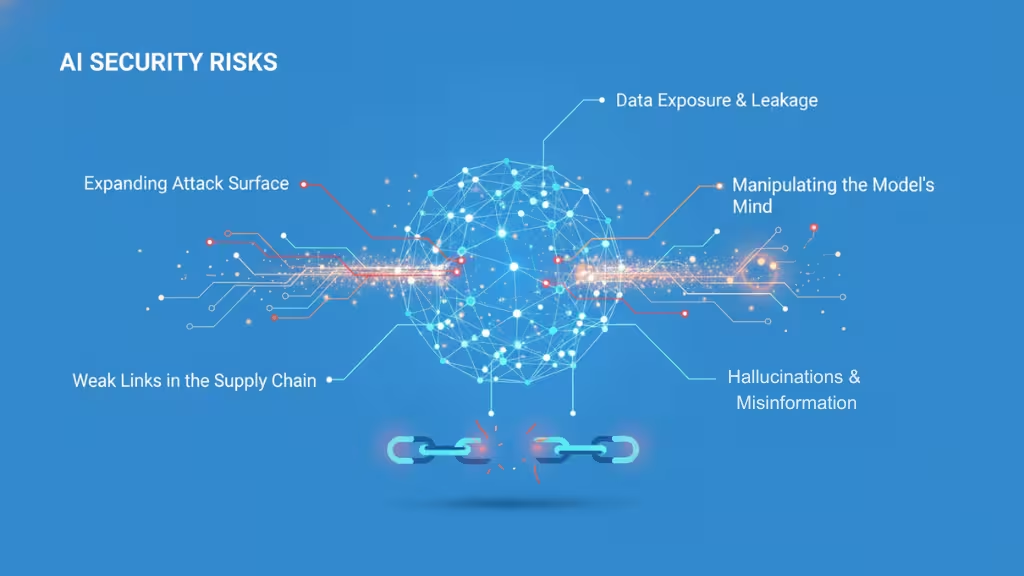

AI Security Risks

Artificial intelligence doesn’t just expand what’s possible it expands what’s vulnerable. As organizations integrate AI into every layer of their operations, the potential for exploitation grows in unexpected directions. The result is a new generation of security risks that traditional cybersecurity tools were never designed to handle.

The Expanding Attack Surface

AI connects to everything: data sources, APIs, models, and cloud services. That interconnectedness makes it powerful and dangerously exposed. Unlike traditional systems with defined perimeters, AI environments create a fluid web of inputs and dependencies where a single compromised dataset or model checkpoint can cascade into enterprise-wide risk.

In essence, your data has become your perimeter and once it’s breached, so is your AI.

Data Exposure and Leakage

AI models consume massive datasets, often containing sensitive or proprietary information. When not properly governed, these models can unintentionally memorize and reveal private data. Researchers have already shown that large language models can be prompted to disclose training data, including customer details and internal code snippets. For regulated industries, that’s more than a privacy concern it’s a compliance and trust issue that can trigger legal and reputational fallout.

Manipulating the Model’s Mind

Attackers are no longer breaking into networks; they’re breaking into the model’s reasoning. Techniques like data poisoning subtly alter training data so the AI learns false or biased patterns. Others exploit prompt injections, embedding malicious instructions that override a model’s behavior or cause it to leak secrets. Some of these prompts are direct, typed by users; others are indirect, hidden in webpages or documents that AI tools later access. The result is the same: a model that can be tricked into acting against its intended purpose without leaving a trace.

Hallucinations and Misinformation

AI hallucinations when models fabricate information are often dismissed as harmless quirks. But in business or security contexts, hallucinations can be weaponized. A manipulated model might generate misleading threat reports, fake legal summaries, or false alerts that waste resources. Worse, public-facing AIs can spread misinformation that damages brand credibility or influences markets. Security is no longer just about preventing breaches; it’s about ensuring truthfulness and reliability in what AI says and does.

Weak Links in the AI Supply Chain

Every AI system depends on a chain of components open-source libraries, pre-trained models, datasets, APIs, and infrastructure. Each link in that chain can be compromised. Malicious actors have already slipped infected Python packages, tampered model weights, and poisoned datasets into public repositories. Without strict validation, these vulnerabilities can introduce backdoors at the algorithmic level, invisible to standard cybersecurity tools.

The Business Fallout

AI security failures aren’t just technical, they’re strategic. A compromised model can erode customer trust, expose sensitive data, or produce decisions that lead to legal liability. Data breaches now carry higher financial and regulatory stakes, and model manipulation can quietly sabotage years of brand reputation.

Why Is the Security of AI Important?

AI systems are now central to everyday services, from AI assistants and chatbots to advanced analytics in finance or healthcare. Their popularity also makes them prime targets for malicious actors who want to steal data, disrupt services, or damage reputations. To circumvent this threat, AI developers are consistently working to safeguard AI systems from these threats and maintain consumer confidence.

We’ve all seen global news reports of data breaches, where customer information (such as addresses, passport numbers, or driver’s license details) is hacked, ransomed, and sometimes leaked. Although these cyber attacks aren’t exclusively AI attacks, they demonstrate how even secure companies with multilayered protection can fall victim to sophisticated attacks.

Technological advancement is a double-edged sword: Even as AI systems improve, threat actors are becoming more adept at exploiting vulnerable systems, potentially impacting any AI processes that rely on cloud environments.

For example, imagine if an AI application at a major Australian bank or in a national healthcare database was specifically targeted. The consequences would be even more severe if those AI systems and their cloud security were compromised. By focusing equally on using AI for security (faster threat detection, smarter defences) and securing AI systems (preventing adversarial attacks, safeguarding data), you can keep services running smoothly, protect customer information, and maintain trust in AI-driven solutions.

There’s also the issue of compliance. As governments catch up and implement data governance regulations to reign in the AI Wild West, AI security will play a major, mandated role in preventing bad actors from harming others. The guiding principle here is about ensuring AI is a force for good for society through effective security measures.

Benefits of AI Security

AI security isn’t just a defense mechanism; it’s an investment multiplier. When implemented properly, it enhances protection, reduces costs, accelerates operations, and increases stakeholder confidence.

In other words, AI security transforms risk into resilience, turning complex, unpredictable systems into trusted, high-performing assets.

Below are the key benefits organizations realize when they integrate AI security into their broader digital strategy.

1. Enhanced Threat Detection

Traditional tools rely on signatures and known attack patterns. AI security adds adaptive intelligence learning from new data, detecting subtle anomalies, and flagging threats that evade rule-based systems.

For example:

- Detecting zero-day exploits through pattern deviation.

- Identifying insider threats from unusual data access behavior.

- Flagging AI model tampering before damage occurs.

A well-secured AI system acts as both sensor and shield, expanding visibility across your infrastructure while protecting itself.

2. Faster Incident Response

Speed matters. According to IBM’s Cost of a Data Breach Report 2025, AI-driven detection and automation can reduce Mean Time to Response (MTTR) by up to 70%.

AI security systems not only detect anomalies they act on them:

- Automatically quarantining affected systems.

- Revoking compromised credentials.

- Blocking malicious IPs or API requests in real time.

This level of automation transforms incident response from manual firefighting into continuous, adaptive containment.

3. Greater Operational Efficiency

Security teams are overloaded with thousands of alerts, limited staff, and constant fatigue. AI security streamlines this chaos by automating repetitive analysis and prioritizing alerts based on risk scores.

That means:

- Analysts spend time on high-value investigation, not noise.

- Security operations centers (SOCs) can scale without hiring sprees.

- Costs drop while coverage improves.

Result: A leaner, smarter, more responsive security operation that grows with your business.

4. A Proactive Approach to Cybersecurity

AI security allows organizations to predict and prevent attacks not just react to them. By analyzing historical patterns and simulating threat scenarios, AI systems can:

- Identify potential weak points before exploitation.

- Forecast high-risk attack paths.

- Recommend security improvements automatically.

This predictive capability marks the evolution from defensive cybersecurity to intelligent cyber resilience.

5. Understanding Emerging Threats

Because AI models continuously learn, they detect not just known threats but also new attack behaviors as they evolve.

This capability helps organizations stay ahead of:

- AI-generated phishing campaigns.

- Automated malware and bot attacks.

- Generative AI misuse for fraud or misinformation.

It’s a constant arms race between intelligent attackers and intelligent defenders and AI security ensures you’re on the winning side.

6. Improved User and Customer Experience

Secure AI systems earn trust. When users know their data and interactions are protected, they engage more confidently leading to stronger brand loyalty and adoption.

Examples:

- Customers trust your chatbot to handle payments safely.

- Employees use internal AI tools without fear of data leaks.

- Partners integrate with your AI APIs knowing they’re compliant and monitored.

Security and experience are no longer opposites, they’re complements.

7. Automated Regulatory Compliance

AI security tools help automate compliance with evolving global standards, like:

- GDPR, HIPAA, and CCPA for data protection.

- EU AI Act, ISO/IEC 42001, and NIST AI RMF for AI governance.

They generate audit logs, monitor data lineage, and validate model integrity, reducing manual reporting and compliance overhead.

8. Ability to Scale Securely

Without proper security, scaling AI means scaling risk. AI security frameworks allow enterprises to expand confidently across more data, models, and users without compromising control.

This ensures:

- Consistent enforcement of policies across all AI systems.

- Unified monitoring from pilot projects to enterprise-wide deployments.

- A culture of secure innovation, not fear-driven limitation.

Secure scale = sustainable growth.

Top AI Security Challenges

Despite its advantages, implementing AI security isn’t easy. Most organizations face a steep learning curve, tangled toolsets, and gaps between data science, security, and compliance teams.

The result? AI adoption outpaces AI protection, leaving systems intelligent, but exposed.

Here are the key challenges businesses must overcome to build a secure and scalable AI ecosystem.

1. Lack of AI Security Expertise

AI security is a relatively new field sitting at the crossroads of machine learning, cybersecurity, and governance. Unfortunately, most organizations have experts in one of those areas, but rarely in all three.

- Data scientists may not understand adversarial threats.

- Security teams may not grasp how models learn or drift.

- Compliance teams may not have visibility into the technical risks.

This skill gap leads to misconfigured systems, unchecked model behaviors, and inconsistent controls.

How to overcome it:

- Build cross-functional AI security teams that include data engineers, SOC analysts, and governance officers.

- Provide training in emerging domains like adversarial ML, prompt security, and AI risk management.

- Partner with vendors or universities offering AI security certifications and red-teaming programs.

AI security isn’t a tool problem, it’s a talent problem first.

2. Shadow AI and Lack of Visibility

The rise of “shadow AI” unauthorized or unmonitored AI use inside organizations, is one of the fastest-growing risks in 2025. Employees connect AI tools, APIs, and chatbots to internal systems without oversight, often exposing sensitive data unknowingly.

Common examples:

- A marketing team uploads customer data into ChatGPT for content analysis.

- A developer fine-tunes an LLM using unapproved datasets.

- A business unit deploys a GenAI agent without data governance.

Each of these creates a blind spot for IT and compliance.

Without discovery tools and centralized monitoring, leaders can’t answer:

- Which AI models are in use?

- Where is data flowing?

- Who has access, and what’s being stored?

How to overcome it:

- Deploy AI model discovery tools that inventory all internal and external AI usage.

- Implement zero-trust principles for all AI endpoints; every prompt, model, and connection must be verified.

- Create clear AI usage policies that balance flexibility with control.

You can’t secure what you can’t see. Visibility is step one in AI risk management.

3. Reliance on Traditional Security Tools

Most enterprises still depend on conventional firewalls, antivirus software, and network monitoring systems. While these tools protect infrastructure, they don’t understand AI behavior, things like data drift, adversarial input, or model theft.

In short, legacy tools secure the system but not the intelligence running on it.

Example:

A traditional EDR tool might detect unauthorized file access, but it won’t notice if a model suddenly starts leaking training data through its outputs.

How to overcome it:

- Integrate AI-aware security solutions capable of monitoring model behavior, prompts, and inference patterns.

- Incorporate model integrity checks, drift detection, and prompt filtering into your security stack.

- Treat models like living assets that require continuous protection not static code.

Traditional cybersecurity guards your walls; AI security guards your brain.

4. Data Quality and Provenance Risks

AI’s intelligence is only as strong as its data. If data sources are unverified, outdated, or biased, your security system inherits those flaws amplifying both false alarms and missed detections.

Many breaches begin not with malicious intent, but with dirty or incomplete data used during training or monitoring.

How to overcome it:

- Establish data lineage tracking and enforce source verification for every dataset.

- Use hashing and cryptographic signatures to ensure data integrity.

- Regularly retrain models on curated, validated datasets to maintain trustworthiness.

5. Fragmented Governance and Accountability

Even with advanced tools, security can fail if no one owns it. In many enterprises, AI risk is spread thinly across IT, compliance, and operations creating accountability vacuums.

How to overcome it:

- Assign a Chief AI Security Officer (CAISO) or designate clear ownership for AI governance.

- Use standardized frameworks (e.g., NIST AI RMF, ISO 42001, AI TRiSM) to align teams and measure maturity.

- Make AI security part of board-level reporting not buried in IT updates.

Governance turns AI security from a technical fix into an organizational capability.

How Can You Implement AI Security in Your Business?

Building AI security into your organization isn’t just about buying new tools it’s about creating a secure-by-design culture around how AI is built, deployed, and maintained.

Here’s a practical roadmap to get started whether you’re deploying your first AI model or managing enterprise-scale AI systems across multiple teams.

Step 1: Assess Your Risk and Compliance Requirements

Every organization’s AI risk profile is unique.

Start by conducting a comprehensive AI risk assessment that maps out:

- What AI systems you currently use (or plan to use).

- What data they rely on.

- Which regulations or frameworks apply (e.g., GDPR, EU AI Act, ISO/IEC 42001, NIST AI RMF).

Ask key questions like:

- What would happen if this model was compromised or misused?

- What’s the potential impact on data privacy, compliance, or brand trust?

- Who’s accountable for the security and governance of this AI system?

Once the risks are clear, align your AI security strategy with your existing cybersecurity and compliance programs.

Step 2: Secure Your Data Pipelines

Data is both AI’s fuel and its biggest vulnerability. A single poisoned or corrupted dataset can undermine even the most advanced model.

Protect your AI data lifecycle by:

- Verifying data provenance use checksums, version control, and source authentication.

- Encrypting data in transit and at rest.

- Anonymizing and masking sensitive information before training.

- Filtering malicious or mislabeled samples to prevent data poisoning.

- Implementing Data Loss Prevention (DLP) for AI-related storage and logs.

Treat your data pipeline as part of your security perimeter. When data integrity is guaranteed, model integrity follows.

Step 3: Establish Data Access Control

Not everyone should have access to AI models or datasets and even fewer should be able to modify them.

Build Zero Trust principles into your AI environment:

- Use role-based access control (RBAC) for data, models, and APIs.

- Implement multi-factor authentication (MFA) for all AI-related systems.

- Segment development, testing, and production environments to contain exposure.

- Log every access, modification, or model interaction for auditability.

For LLMs and generative AI systems, deploy prompt filtering and output monitoring to prevent sensitive data exfiltration.

Step 4: Automate Threat Detection and Response

Manual security processes can’t keep up with the scale and speed of AI.

That’s why automation is essential especially for monitoring and response.

Integrate AI-driven security automation tools that can:

- Continuously monitor models and pipelines for anomalies.

- Detect prompt injection, adversarial behavior, or unusual data flow.

- Automatically quarantine compromised assets or block malicious requests.

- Feed findings back into your security information and event management (SIEM) system.

Pair automation with human oversight so your analysts can validate and improve detection logic over time. This “human-in-the-loop” model ensures AI security remains both efficient and accountable.

Step 5: Train Your Teams and Update Regularly

AI security isn’t static it’s a living process that evolves with every new dataset, model, and threat. To stay ahead, you must continuously educate, update, and evaluate.

Best practices include:

- Conduct AI security awareness programs across teams.

- Schedule red-teaming exercises to simulate AI-specific attacks (prompt injection, model theft, data poisoning).

- Periodically retrain and fine-tune models to adapt to new threats.

- Review and refresh your AI security policies every quarter.

Remember, security maturity grows over time, and your people are as important as your technology.

AI Security Best Practices

AI security isn’t a one-time setup it’s a continuous discipline. Just as AI systems learn and evolve, so must their defenses. The organizations that stay secure are those that treat AI security as an ongoing process, not a project.

Below are the key best practices that turn AI systems from vulnerable assets into resilient, trustworthy intelligence engines.

1. Secure Data Collection and Transfer

The integrity of an AI system begins at the data source. If data is tampered with during collection or transmission, the resulting model is already compromised.

Best practices:

- Collect data only from verified, trusted, and documented sources.

- Encrypt data at every stage in transit (TLS/SSL), at rest, and during preprocessing.

- Use data hashing and digital signatures to verify that datasets haven’t been altered.

- Employ data validation layers that detect outliers or anomalies before ingestion.

2. Control Access at Every Step

AI pipelines have many moving parts: data scientists, MLOps engineers, developers, and external APIs. Every connection and credential introduces potential risk.

Best practices:

- Apply role-based access control (RBAC) for data, models, and APIs.

- Use least-privilege principles to only grant access necessary for the task.

- Implement continuous authentication and key rotation for all AI services.

- Segment networks to separate model training, inference, and management environments.

Access control isn’t bureaucracy; it’s proactive containment of potential breaches.

3. Use Threat Simulations and Red-Teaming

Don’t wait for attackers to find your weaknesses and test them yourself. AI-specific red-teaming exercises simulate how adversaries might exploit models, data pipelines, or LLM prompts.

Techniques include:

- Adversarial input testing: Crafting inputs to test model robustness.

- Prompt injection simulations: Checking if LLMs can be tricked into violating rules.

- Data poisoning scenarios: Assessing how the system reacts to corrupted samples.

Regular threat simulations not only strengthen defenses but also train teams to think offensively, an invaluable skill in AI-driven security.

4. Be Proactive, Not Reactive

Most cyber defenses fail because they rely on alerts after the fact. AI security must anticipate threats, not just detect them.

Best practices:

- Monitor for model drift and data anomalies continuously.

- Deploy predictive analytics to identify emerging risks early.

- Use risk-based prioritization to focus on vulnerabilities with the highest impact.

- Establish incident playbooks for likely AI-specific threats (prompt injection, model theft, etc.).

Proactive security shifts your stance from firefighting to foresight protecting not just your data, but your decisions.

5. Update and Retune Models Regularly

AI models degrade over time. Their data becomes outdated, patterns evolve, and performance drifts a phenomenon called model decay.

Unmaintained models not only lose accuracy but can also become security liabilities. For example, outdated fraud detection models may miss new attack methods entirely.

Best practices:

- Schedule periodic retraining with fresh, validated datasets.

- Monitor performance metrics and accuracy deviations.

- Validate all retrained models before deployment.

- Automate the update process through CI/CD pipelines with security gating.

Security that doesn’t evolve becomes a vulnerability itself.

6. Use AI Responsibly

Responsible AI and secure AI are two sides of the same coin. A secure system that behaves unethically is just as damaging as an insecure one.

Best practices:

- Embed ethical guidelines in model design (fairness, transparency, human oversight).

- Track decision provenance ensures every model output is explainable and traceable.

- Regularly assess for bias, misuse, and data leakage.

- Combine security monitoring with AI governance frameworks (e.g., NIST AI RMF, AI TRiSM).

Conclusion

The rise of AI has redefined what it means to be secure. It’s no longer enough to protect networks or endpoints; organizations must now safeguard the intelligence that drives every decision. AI security sits at the heart of that mission, blending technology, governance, and human oversight to ensure systems remain trustworthy and resilient.

By embedding AI security into every stage of development and deployment, businesses don’t just defend against emerging threats they build the foundation for responsible innovation. In the years ahead, those who treat AI security as a strategic priority will lead not only in protection, but in progress.

FAQs About AI Security

Can small businesses benefit from AI security?

Yes. AI security tools help small businesses detect threats, protect sensitive data, and ensure compliance without needing large security teams.

Does using AI for security replace human security teams?

No. AI automates detection and response, but human oversight is essential for judgment, strategy, and validation.

How often should you retrain models for security purposes?

Retrain models every 3–6 months or whenever data, threats, or behaviors significantly change.

Is AI security only relevant for tech-heavy industries?

No. Any business using AI for data, automation, or decision-making needs AI security to prevent misuse or data leaks.

What is an AI security posture, and why does it matter?

AI security posture measures how well your organization protects AI systems, data, and pipelines. A strong posture means lower risk and higher trust.

This page was last edited on 2 December 2025, at 4:57 pm

Start a conversation with our team to solve complex challenges and move forward with confidence.