As artificial intelligence reshapes industries, ethical AI implementation has become a critical priority for businesses rather than an option. AI offers powerful opportunities for efficiency, innovation, and growth, but it also introduces risks related to bias, compliance, and trust that organizations cannot afford to overlook.

This guide turns theory into action by outlining practical steps and established frameworks for ethical AI implementation. You will learn how to reduce risk, support compliance, and build AI systems that are effective, responsible, and trustworthy.

What Is Ethical AI Implementation in Business?

Ethical AI implementation in business means designing, deploying, and managing AI systems in ways that are fair, transparent, accountable, and compliant with legal and societal expectations.

Ethical AI goes beyond simply making AI work. It ensures that AI systems uphold principles such as fairness (avoiding bias), transparency (explaining how decisions are made), privacy (protecting data), and accountability (assigning responsibility). These values safeguard against harm, and their focus on responsible impact is what distinguishes ethical AI adoption from general AI deployment. Ethical AI is also closely linked to governance, evolving regulation, and societal trust, all of which underpin your business’s credibility.

Why Should Businesses Prioritize Ethical AI?

Prioritizing ethical AI helps businesses avoid legal penalties, reduce reputational risk, build stakeholder trust, and unlock competitive advantages.

- Legal compliance: Avoid fines and sanctions under regulations like the EU AI Act and sector-specific rules.

- Brand trust: Foster credibility with customers, partners, and the public.

- Regulatory preparedness: Stay ahead of evolving expectations from bodies such as the EU and the OECD.

- Risk mitigation: Prevent operational failures, costly recalls, or discrimination claims.

- Long-term ROI: Support sustainable innovation and future-proof competitiveness.

Organizations that implement ethical AI early are better placed to reinforce customer loyalty, streamline compliance, and drive responsible growth.

What Are the Risks of Unethical AI in Business Use?

Unethical AI puts businesses at risk of regulatory fines, customer backlash, data breaches, and operational failures.

| Risk | Example | Mitigation Approach |
|---|---|---|
| Data/Algorithmic Bias | AI recruiter favoring certain demographics | Audit data; bias-mitigation algorithms |
| Privacy Violations | Misuse of sensitive customer data | Privacy-by-design; robust access controls |
| Regulatory Fines | Non-compliance with EU AI Act, GDPR | Regulatory assessments; documentation |
| Loss of Trust/Public Backlash | AI errors causing negative headlines or complaints | Transparent reporting; stakeholder engagement |
| Operational Failures | Inaccurate AI decisions leading to losses | Ongoing monitoring; human-in-the-loop |
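The human-in-the-loop mitigation in the last row can be pictured as a simple routing rule: confident predictions proceed automatically, and everything else goes to a person. The sketch below is illustrative only. The `Decision` class, the `route` function, and the 0.85 threshold are assumptions made for this example, not part of any particular product or regulation.

```python
from dataclasses import dataclass

# Hypothetical threshold: predictions below this confidence go to a person.
REVIEW_THRESHOLD = 0.85


@dataclass
class Decision:
    outcome: str       # e.g. "approve" or "decline"
    confidence: float  # model confidence in the range [0, 1]


def route(decision: Decision, threshold: float = REVIEW_THRESHOLD) -> str:
    """Return 'auto' when the model may act alone, else 'human_review'."""
    if not 0.0 <= decision.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return "auto" if decision.confidence >= threshold else "human_review"


print(route(Decision("approve", 0.97)))  # auto
print(route(Decision("decline", 0.62)))  # human_review
```

In practice the threshold would likely be set per use case, with stricter review for decisions that affect people's access to credit, jobs, or services.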

Real-world cases show that ignoring ethics can be costly. In the US, a major financial institution faced investigation after its credit algorithm unfairly favored certain applicants. Separately, an HR tech company’s opaque AI assessment tool led to legal scrutiny and damaged its brand.

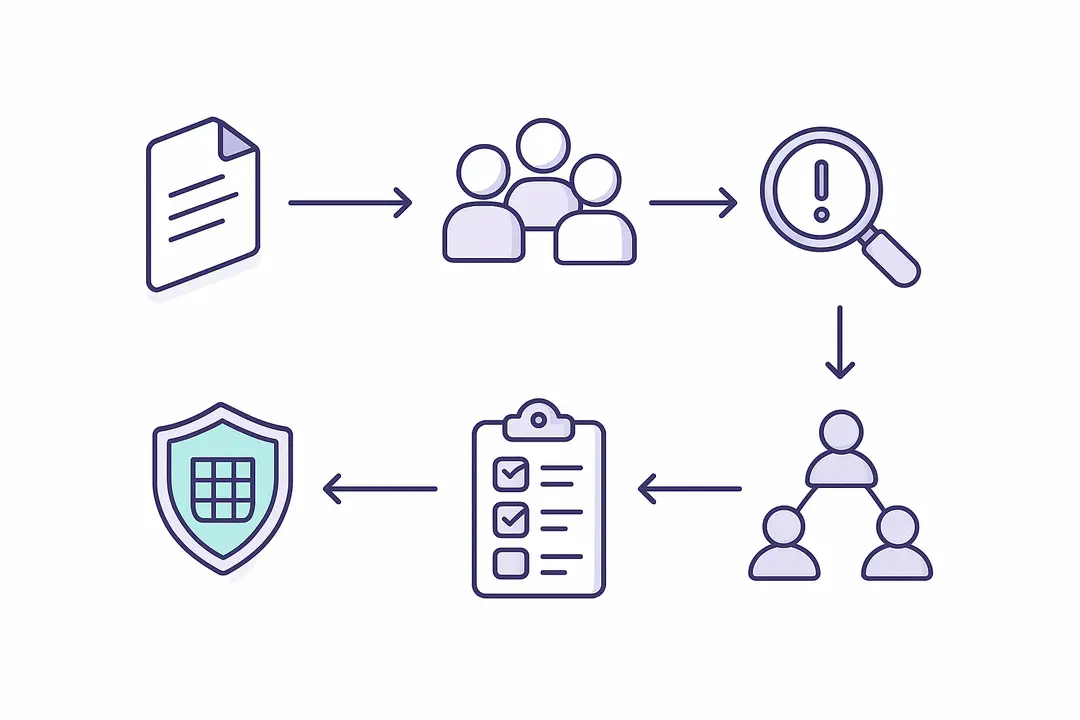

How to Approach Ethical AI Implementation for Businesses: A Step-by-Step Framework

Implementing ethical AI in business requires a structured framework that includes policy development, team formation, risk assessment, data management, ongoing governance, and active stakeholder engagement.

To guide your organization, follow these six essential steps:

1. Build an AI Ethics Policy

2. Assemble an Interdisciplinary AI Ethics Team

3. Conduct an Ethical Risk Assessment for AI Projects

4. Ensure Data Quality, Fairness & Privacy in AI Systems

5. Set Up Ongoing AI Governance & Audit Processes

6. Engage Stakeholders & Communicate AI Impact

Below, each step is detailed with action points, best practices, and downloadable aids.

Step 1: Build an AI Ethics Policy for Your Organization

Start by defining your organization’s ethical standards for AI, setting a foundation for responsible technology use.

Key actions:

- Draft clear ethical AI principles. Reference established frameworks like the OECD AI Principles and the EU AI Act to anchor your policy.

- Include specific clauses, such as:

  - Commitment to bias reduction and fairness.

  - Requirements for transparency and explainability.

  - Policies on data privacy and consent.

  - Mechanisms for accountability and oversight.

- Make your ethics policy actionable by setting out decision rights, escalation paths, and compliance checklists.

Download: “AI Ethics Policy Template” (PDF)

Step 2: Assemble an Interdisciplinary AI Ethics Team

An effective AI ethics program requires diverse perspectives—from technical experts to legal, HR, and frontline staff.

Action steps:

- Identify key roles: AI developers, data scientists, compliance/legal officers, HR, product managers, and representatives from impacted communities.

- Establish an AI Ethics Advisory Board to formalize review and oversight.

- Tailor team size/structure to your organization: SMEs may combine roles; enterprises can develop specialized committees.

| Team Role | Responsibility |
|---|---|
| Technical Lead | Model development, bias checks |
| Legal/Compliance | Regulatory review, documentation |
| HR | Ethics training, employee input |
| Business Unit Lead | Process alignment, adoption |
| Community Rep | User/impacted group voice |

Step 3: Conduct an Ethical Risk Assessment for AI Projects

Systematically assess ethical risks for each AI initiative to prevent harm before deployment.

Action steps:

- Set up a risk review process: Regularly review all AI projects, especially those impacting customers or employees.

- Identify risk sources: Check for bias in data, unintended consequences in algorithms, or deployment in sensitive contexts.

- Use a risk assessment matrix, rating likelihood and impact, to prioritize mitigation.

Example Risk Matrix:

| Risk Area | Likelihood | Impact | Mitigation |
|---|---|---|---|
| Data Bias | High | High | Data audit, retrain |
| Privacy Breach | Medium | High | Encryption, consent |
| Lack of Explainability | Low | Medium | Deploy XAI tools |
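One way to turn a matrix like this into a priority order is to map each rating to a number and multiply likelihood by impact. The sketch below assumes a 1 to 3 scale and a simple product score. Both are illustrative choices, not a prescribed methodology, and the names `LEVELS`, `score`, and `prioritize` are hypothetical.

```python
# Assumed numeric scale for the qualitative ratings in the matrix.
LEVELS = {"Low": 1, "Medium": 2, "High": 3}

# Rows taken from the example matrix: (risk area, likelihood, impact).
risks = [
    ("Lack of Explainability", "Low", "Medium"),
    ("Data Bias", "High", "High"),
    ("Privacy Breach", "Medium", "High"),
]


def score(likelihood: str, impact: str) -> int:
    """Combine the two ratings into a single priority score."""
    return LEVELS[likelihood] * LEVELS[impact]


def prioritize(rows):
    """Sort risks from highest to lowest score."""
    return sorted(rows, key=lambda row: score(row[1], row[2]), reverse=True)


for name, likelihood, impact in prioritize(risks):
    print(f"{name}: {score(likelihood, impact)}")
# Data Bias: 9
# Privacy Breach: 6
# Lack of Explainability: 2
```

A product score treats likelihood and impact as equally important. Teams that consider severe harm unacceptable at any likelihood may prefer to sort by impact first.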

Step 4: Ensure Data Quality, Fairness & Privacy in AI Systems

High-quality, unbiased, and secure data is foundational for ethical AI.

Best practices:

- Audit data for historical and sampling bias, both before model training and after deployment (to detect drift).

- Deploy privacy-by-design measures: Limit data collection, anonymize information, and rigorously protect personally identifiable information (PII).

- Implement explainable AI (XAI) tools to clarify model decisions to stakeholders.
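As a concrete illustration of a bias audit, the sketch below compares selection rates across groups and reports the ratio of the lowest rate to the highest. This is only one of several fairness measures. The 0.8 cutoff reflects the four-fifths heuristic used in US employment contexts and is an assumption here, not a universal legal test. The sample data and function names are made up for the example.

```python
from collections import defaultdict

# Assumed cutoff, borrowed from the four-fifths heuristic.
FOUR_FIFTHS = 0.8


def selection_rates(records):
    """records: iterable of (group, selected) pairs, where selected is a bool."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        positives[group] += int(selected)
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(records):
    """Ratio of the lowest group selection rate to the highest."""
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so no disparity can be measured
    return min(rates.values()) / highest


# Made-up data: group A has 8 of 10 selected, group B has 5 of 10.
sample = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 5 + [("B", False)] * 5

ratio = disparate_impact_ratio(sample)
print(f"ratio = {ratio:.3f}")                           # ratio = 0.625
print("flag for review" if ratio < FOUR_FIFTHS else "within threshold")
```

Running the same check on live decisions after deployment, not only on training data, is one way to catch the drift mentioned above.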

Data Quality Checklist:

- Bias checks passed

- Data privacy controls in place

- Regular audits scheduled

- Explainability/reporting tools enabled
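For the privacy controls item, one common building block is replacing direct identifiers with keyed hashes before data reaches a training pipeline. The sketch below uses Python's standard `hmac` and `hashlib` modules. The field names, the `pseudonymize` helper, and the hard-coded key are assumptions for illustration. A real deployment would load the key from a secrets manager.

```python
import hashlib
import hmac

# Assumption: in practice this key comes from a secrets manager, not source code.
SECRET_KEY = b"replace-with-a-managed-secret"

# Hypothetical names of the fields treated as PII in this example.
PII_FIELDS = {"email", "phone"}


def pseudonymize(record: dict, key: bytes = SECRET_KEY) -> dict:
    """Return a copy of record with PII fields replaced by keyed SHA-256 hashes."""
    cleaned = {}
    for field, value in record.items():
        if field in PII_FIELDS:
            digest = hmac.new(key, str(value).encode("utf-8"), hashlib.sha256)
            cleaned[field] = digest.hexdigest()
        else:
            cleaned[field] = value
    return cleaned


customer = {"email": "jane@example.com", "phone": "555-0100", "plan": "pro"}
safe = pseudonymize(customer)
print(safe["plan"])        # the non-PII field is unchanged
print(len(safe["email"]))  # 64 hex characters for SHA-256
```

Keyed hashing is pseudonymization rather than anonymization: anyone holding the key can re-link records, so the output should still be handled as personal data.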

Step 5: Set Up Ongoing AI Governance & Audit Processes

Continuous governance and auditing ensure AI systems remain ethical and compliant as business and regulations evolve.

Implement these processes:

- Define what and how to audit: Include performance, fairness, and privacy metrics.

- Set monitoring frequency: Quarterly or after major updates/deployments.

- Document findings: Link audit outcomes to business KPIs and compliance records.

- Adapt and improve policies based on audit insights, and choose audit tools that fit your sector’s needs.
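The monitoring cadence described above (quarterly, or after a major update) can be encoded as a small check that a scheduler or dashboard calls. This is a minimal sketch: treating a quarter as 90 days and the function name `audit_due` are assumptions for illustration.

```python
from datetime import date, timedelta
from typing import Optional

# Assumption: "quarterly" is approximated as 90 days.
AUDIT_INTERVAL = timedelta(days=90)


def audit_due(last_audit: date, today: date,
              last_major_update: Optional[date] = None) -> bool:
    """Return True when an audit is due under either trigger."""
    # Trigger 1: a major update shipped after the most recent audit.
    if last_major_update is not None and last_major_update > last_audit:
        return True
    # Trigger 2: the regular interval has elapsed.
    return today - last_audit >= AUDIT_INTERVAL


print(audit_due(date(2025, 1, 1), date(2025, 2, 1)))                     # False
print(audit_due(date(2025, 1, 1), date(2025, 4, 15)))                    # True
print(audit_due(date(2025, 1, 1), date(2025, 2, 1), date(2025, 1, 20)))  # True
```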

Step 6: Engage Stakeholders & Communicate AI Impact

Ongoing engagement and clear communication are key to building trust and ensuring your AI systems serve all stakeholders.

Action points:

- Notify customers and users when AI is in use, and explain how it affects decisions.

- Secure buy-in from staff through training, involvement in review boards, and transparent communication.

- Establish complaint and appeals channels: Enable affected parties to challenge or query AI decisions.

- Invest in continuous employee education on responsible AI practices.

Stakeholder Engagement Checklist:

- Users notified of AI use

- Staff training conducted

- Feedback/appeals mechanism active

What Are the Key Regulations for Ethical AI in Business?

Frameworks such as the EU AI Act and the OECD AI Principles shape ethical AI in business: the EU AI Act imposes binding requirements on many AI systems, the OECD AI Principles are voluntary, and additional sector-specific rules apply in finance, healthcare, and other industries.

Key frameworks:

- OECD AI Principles: Voluntary international standards for transparency, robustness, and human-centric design.

- EU AI Act: A comprehensive, binding regulation that requires risk classification, transparency obligations, and documentation for certain AI systems.

- Sector requirements: Finance (e.g., algorithmic trading), healthcare (e.g., patient data protections).

| Regulation | Jurisdiction | Focus | Applicability |
|---|---|---|---|
| OECD Principles | Global | General principles | Voluntary (strongly advised) |
| EU AI Act | EU | Risk, transparency | Mandatory for many uses |
| US Guidelines | US | Fairness, privacy | Federal/State-level; evolving |

Accountability typically falls to compliance officers, chief data officers (CDOs), or dedicated AI ethics leads.

Real-World Examples: Ethical AI in Action

- Enterprise Success: A major European bank implemented automated loan approvals. By conducting extensive bias audits and adjusting models based on audit findings, they increased approval rates for underrepresented applicants without increasing risk, earning positive public coverage and preempting regulatory issues.

- SME Quick Win: A regional HR firm leveraged an “AI Ethics Policy” checklist and open-source explainability tools to pilot their candidate screening system. This allowed quick implementation without significant new hires or costs.

- Cautionary Tale: A global retailer launched a predictive hiring AI without transparent communication. When the system’s decisions were challenged and found to reflect historical bias, the company faced negative press, regulatory probes, and ultimately withdrew the tool.

As Professor Sandra Wachter of Oxford Internet Institute notes, “Embedding ethics from the start is not only a regulatory imperative—it’s a business differentiator.”

How Can Businesses Build a Culture of Ethical AI? (Training & Change Management)

Building a culture of ethical AI requires ongoing training, internal advocacy, and leadership support to embed responsible AI use into your company DNA.

Quick tips:

- Prioritize AI ethics training for all staff, not just technical teams.

- Put HR in charge of onboarding and continuous learning on AI risks and responsibilities.

- Establish feedback loops so employees can raise concerns and share best practices.

- Showcase ethical wins and lessons learned to normalize responsible behavior.

Organizations that keep ethics top of mind at every level tend to see higher stakeholder trust and smoother change management as AI evolves.

Ethical AI Implementation: Summary Table & Key Takeaways

Below is a one-page reference for your ethical AI journey:

| Step | Outcome |
|---|---|
| 1. AI Ethics Policy | Clear principles and compliance |
| 2. Interdisciplinary Ethics Team | Robust oversight, accountability |
| 3. Ethical Risk Assessment | Minimized harm, regulatory readiness |
| 4. Data Quality & Privacy Controls | Bias mitigation, legal protection |
| 5. Governance & Audit | Ongoing transparency, improvement |
| 6. Stakeholder Engagement | Trust, reduced exposure |

Ethical AI Do/Don’t Checklist

- Do: Formalize policy, audit systems, engage diverse teams, communicate openly.

- Don’t: Rush deployment without review, ignore user feedback, overlook documentation.

Frequently Asked Questions: Ethical AI for Businesses

What steps are involved in ethical AI implementation for businesses?

Ethical AI implementation involves creating an AI ethics policy, forming a cross-functional team, assessing risks, ensuring data quality and fairness, setting up ongoing governance and audits, and engaging stakeholders.

Why are AI governance and compliance strategies important in ethical AI implementation?

AI governance and compliance strategies ensure accountability, reduce risk, and align AI systems with legal and ethical standards.

How can responsible AI practices reduce bias in AI systems?

Responsible AI practices reduce bias by auditing datasets, using bias detection tools, involving diverse teams, and continuously monitoring outcomes.

What regulations impact ethical AI implementation for businesses?

The EU AI Act sets binding requirements, while the OECD AI Principles provide voluntary guidance. Both inform the governance and compliance strategies businesses use to keep AI safe and lawful.

What is an AI ethics board?

An AI ethics board is a group of experts from technical and legal areas that oversees and guides an organization’s ethical AI implementation.

How do businesses ensure transparency in ethical AI implementation?

Transparency relies on explainable AI tools, clear documentation, and open communication, all of which are core elements of AI governance and compliance.

Is ethical AI implementation legally required?

In some regions, parts of ethical AI implementation are legally required, while in others they remain best practice. Strong responsible AI practices help organizations stay ahead of regulation.

What are the risks of ignoring responsible AI practices?

Ignoring responsible AI practices can lead to compliance issues, reputational damage, and legal penalties. A structured approach to ethical AI implementation helps mitigate these risks.

Can small companies adopt ethical AI implementation effectively?

Yes. Ethical AI implementation can be scaled down for smaller organizations, and adopting basic governance, compliance, and responsible-use practices can still deliver strong results.

What is explainable AI in the context of ethical AI implementation?

Explainable AI makes a model’s decisions understandable and transparent to stakeholders. It supports responsible AI practices and builds trust.

How do AI governance and compliance strategies improve business outcomes?

Strong AI governance and compliance strategies enhance reliability, reduce risk, and support sustainable growth.

Why are responsible AI practices becoming a priority?

Responsible AI practices are gaining importance because of increasing regulation and rising user expectations. They play a critical role in ethical AI implementation and long-term success.

Conclusion: The Business Imperative for Ethical AI

Ethical AI implementation is no longer optional for businesses; it is a critical foundation for sustainable growth, trust, and long-term success. Organizations that prioritize responsible AI practices can unlock innovation while protecting their reputation and ensuring compliance.

By following a structured approach and embedding ethics into every stage of AI development, businesses can confidently adopt AI technologies without unnecessary risk. The focus should not only be on what AI can do, but on how it is built, used, and governed to create meaningful and responsible outcomes.

Key Takeaways

- A structured ethical AI framework protects your business and unlocks value.

- Proactive governance, risk assessment, and stakeholder communication are critical.

- Regulations like the EU AI Act set clear standards for business AI compliance.

- Both SMEs and enterprises can implement responsible AI using available resources.

- Embedding ethical AI into company culture ensures long-term adaptability and trust.

This page was last edited on 3 April 2026, at 10:41 am
